Recovering a site from a major spam manual action is weeks of spreadsheet work before a reconsideration request gets written.

Recovering a Spam-Penalized Site With 500+ Pages: A Realistic Guide

Recovery from a “Major spam problems – affects all pages” manual action on a 500-plus page site takes three to six months of genuine remediation work, not a weekend audit and a reconsideration click. Think of the manual action as a building inspector who has walked every floor, pulled up the subfloor, and condemned the whole structure. Repainting the facade will not satisfy that inspector. Every compromised page must be substantially rewritten, merged, or removed, and every toxic link must be documented and addressed before a reconsideration request goes anywhere near the submit button. Most guides in circulation omit the part where you spend three days staring at a spreadsheet of 600 URLs deciding which ones deserve to live. This guide does not.

Step 1: Confirm the Penalty Before Touching Anything

Open Google Search Console, navigate to Security & Manual Actions, then Manual Actions. If a manual action is present, it will name the violation and specify whether it affects the entire domain or a subset of URLs. “Major spam problems – affects all pages” means sitewide—there is no surgical option.

This step matters because manual actions and algorithmic demotions require entirely different remediation paths. A manual action has a human reviewer on the other end and a formal reconsideration process. An algorithmic demotion has no reconsideration pathway; recovery depends on site changes and Google’s reprocessing windows, which can stretch across months with no feedback loop. If the GSC Manual Actions panel is clean, use a tool like Panguin to overlay your traffic drop against known algorithm update dates before assuming you are chasing a manual penalty.
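
Panguin shows this overlay visually. If you want a scripted first pass, the sketch below finds the week with the steepest traffic drop and lists any updates that started near it. It assumes weekly click totals exported from GSC’s Performance report and a hand-maintained list of update start dates, for example copied from Google’s Search Status Dashboard. The update name, the sample numbers, and the 14-day window are placeholders, not real update data.

```python
from datetime import date, timedelta


def largest_weekly_drop(weekly_clicks: dict[date, int]) -> tuple[date, float]:
    """Return the week with the steepest percentage drop versus the prior week."""
    weeks = sorted(weekly_clicks)
    worst_week, worst_change = weeks[0], 0.0
    for prev, cur in zip(weeks, weeks[1:]):
        before = weekly_clicks[prev]
        if before == 0:
            continue  # no baseline to compare against
        change = (weekly_clicks[cur] - before) / before
        if change < worst_change:
            worst_week, worst_change = cur, change
    return worst_week, worst_change


def nearby_updates(drop_week: date, update_starts: dict[str, date],
                   window_days: int = 14) -> list[str]:
    """Name every update that started within window_days of the drop week."""
    window = timedelta(days=window_days)
    return [name for name, start in update_starts.items()
            if abs(start - drop_week) <= window]


# Usage with made-up numbers and a hypothetical update name.
weekly = {date(2024, 1, 1): 1000, date(2024, 1, 8): 980, date(2024, 1, 15): 400}
week, change = largest_weekly_drop(weekly)
print(week, f"{change:.0%}", nearby_updates(week, {"Hypothetical update A": date(2024, 1, 12)}))
```

A drop that lines up with an update window, combined with an empty Manual Actions panel, points toward an algorithmic problem rather than a manual one.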

Also pull GSC’s Page indexing (formerly Index Coverage), HTTPS, and Core Web Vitals reports before diving into content work. Technical problems such as crawl blocks, HTTPS errors, and mobile usability failures can compound a spam penalty or masquerade as one. Rule them out early so the content audit is not contaminated by noise.

Step 2: Run the Content Audit and Apply the Three-Bin Sort

For a 500-plus page site, you cannot audit by feel. Run Screaming Frog or Sitebulb against the full domain and export every URL with its metadata: word count, title, canonical, indexability status, response code. Cross-reference that export against two GSC reports:

- Page indexing, to flag pages that are excluded or erroring.
- Performance, to flag pages generating zero impressions.
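
Below is a minimal sketch of the zero-impression cross-reference. It assumes a Screaming Frog-style crawl export with `Address` and `Indexability` columns. It also assumes a GSC Performance export saved with `Page` and `Impressions` columns. Those column names are assumptions, so rename them to match your actual exports. A crawled URL that is absent from the GSC export is treated as a zero-impression page. The Performance report’s export from the UI is row-capped, so larger sites may need to pull page data through the Search Console API instead.

```python
import csv
import io


def zero_impression_urls(crawl_csv: str, gsc_csv: str) -> list[str]:
    """Return indexable crawled URLs with no impressions in the GSC export.

    Column names are assumptions: 'Address'/'Indexability' for the crawl,
    'Page'/'Impressions' for the GSC Performance export.
    """
    impressions: dict[str, int] = {}
    for row in csv.DictReader(io.StringIO(gsc_csv)):
        # GSC numbers may carry thousands separators, e.g. "1,200".
        impressions[row["Page"]] = int(row["Impressions"].replace(",", ""))

    flagged = []
    for row in csv.DictReader(io.StringIO(crawl_csv)):
        url = row["Address"]
        # A URL missing from the GSC export counts as zero impressions.
        if row["Indexability"] == "Indexable" and impressions.get(url, 0) == 0:
            flagged.append(url)
    return flagged
```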

Now apply triage flags. Pages under roughly 300 words are thin and go into the review pile immediately. Pages with 80 percent or higher content similarity to other pages on the site are duplication candidates; Siteliner automates much of this comparison at scale. Any page that reads as mass-published, template-stamped, or AI-generated without meaningful editorial layering gets flagged regardless of word count.
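
Siteliner does the similarity comparison for you. The sketch below is a stand-in that shows the idea using word 5-gram shingles and Jaccard similarity, which is not necessarily how Siteliner scores pages. The 300-word and 0.8 thresholds come from the flags above, and the input is assumed to be a mapping of URL to extracted body text. Pairwise comparison grows quadratically, but 600 pages produce fewer than 200,000 pairs, which is manageable.

```python
import re
from itertools import combinations


def shingles(text: str, k: int = 5) -> set[tuple[str, ...]]:
    """Overlapping k-word sequences used to compare page bodies."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared shingles divided by all distinct shingles (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def triage_flags(pages: dict[str, str], thin_words: int = 300,
                 dup_threshold: float = 0.8) -> dict[str, set[str]]:
    """Attach 'thin' and 'duplicate' flags to each URL."""
    flags: dict[str, set[str]] = {url: set() for url in pages}
    shingle_sets = {url: shingles(body) for url, body in pages.items()}
    for url, body in pages.items():
        if len(body.split()) < thin_words:
            flags[url].add("thin")
    for a, b in combinations(pages, 2):
        if jaccard(shingle_sets[a], shingle_sets[b]) >= dup_threshold:
            flags[a].add("duplicate")
            flags[b].add("duplicate")
    return flags
```

The mass-published or AI-generated flag stays a human judgment. A script like this only narrows the pile a reviewer has to read.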

The decision at the end of each flag is a three-bin sort:

Fix it. Merge it. Delete it.

Fix means substantially rewriting the page with demonstrable first-hand expertise, adding author attribution, and citing credible external sources—something a human reviewer would recognize as genuinely useful. Merge means consolidating a cluster of thin, similar pages into one authoritative piece and 301-redirecting the rest. Delete means removing the page entirely and redirecting or returning a 404, depending on whether the URL carries any link equity worth preserving.
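
Once every URL has a bin, the merge and delete decisions translate directly into redirect rules. Below is a minimal sketch. It assumes each decision is recorded as a URL, an action, and an optional target, and the `Decision` type is hypothetical. The output is a neutral list of (source, destination, status) rules that you would translate into your server or CMS redirect syntax. The sketch also rejects redirect chains, where a target is itself being redirected.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    url: str
    action: str                   # "fix", "merge", or "delete"
    target: Optional[str] = None  # merge destination, or 301 target for a deleted page


def redirect_rules(decisions: list[Decision]) -> list[tuple[str, Optional[str], int]]:
    """Return (source, destination, status) rules; fixed pages need no rule."""
    moved = {d.url for d in decisions if d.action in ("merge", "delete")}
    rules: list[tuple[str, Optional[str], int]] = []
    for d in decisions:
        if d.action == "merge" and not d.target:
            raise ValueError(f"merge without a destination: {d.url}")
        if d.target in moved:
            raise ValueError(f"redirect chain: {d.url} -> {d.target}")
        if d.action == "merge":
            rules.append((d.url, d.target, 301))
        elif d.action == "delete":
            # Keep link equity with a 301 when a target is given; otherwise return 404.
            rules.append((d.url, d.target, 301) if d.target else (d.url, None, 404))
    return rules
```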

The fix option is the most labor-intensive and the most frequently abused. For location or service pages that are borderline doorway pages, target at least 80 percent content uniqueness versus the primary service page before considering a location variant worth keeping. If you cannot get there with real local signals—specific staff, local case studies, neighborhood-level detail—the page should be merged or deleted.
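
One way to put a number on the 80 percent target is to measure what share of the location page’s five-word shingles do not appear on the primary service page. This containment measure is one possible approach, not an established standard, and the function names are illustrative.

```python
import re


def shingles(text: str, k: int = 5) -> set[tuple[str, ...]]:
    """Overlapping k-word sequences from lowercased text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def uniqueness(location_text: str, primary_text: str, k: int = 5) -> float:
    """Share of the location page's shingles that the primary page lacks."""
    location = shingles(location_text, k)
    if not location:
        return 0.0  # too short to assess; treat as not unique
    shared = location & shingles(primary_text, k)
    return 1 - len(shared) / len(location)


def worth_keeping(location_text: str, primary_text: str, threshold: float = 0.8) -> bool:
    """Apply the 80 percent uniqueness bar before keeping a location variant."""
    return uniqueness(location_text, primary_text) >= threshold
```

A high score cannot tell real local signal from filler. A page that clears the bar with padded text still fails the human reviewer.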

Step 3: Run the Backlink Audit in Parallel

While the content audit is underway, run a separate workstream on backlinks in parallel with it, not after the content work is done. Export the linking data from GSC’s Links report, then supplement it with exports from Ahrefs or Semrush. GSC’s export is a sample rather than a complete inventory, so third-party tools catch links it misses.
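
Each tool reports a different slice of the link graph, so it helps to collapse every export to referring domains. The sketch below also records which sources reported each domain. It is minimal, and the column names passed in are assumptions that depend on how each export is labeled.

```python
import csv
import io
from urllib.parse import urlsplit


def referring_domain(value: str) -> str:
    """Normalize a URL or bare hostname to a lowercase domain without 'www.'."""
    value = value.strip()
    if "://" not in value:
        value = "//" + value  # lets urlsplit parse bare hostnames
    host = urlsplit(value).hostname or ""
    return host[4:] if host.startswith("www.") else host


def merge_link_exports(exports: dict[str, tuple[str, str]]) -> dict[str, set[str]]:
    """Map each referring domain to the set of sources that reported it.

    exports: source name -> (CSV text, name of the column holding the link URL).
    """
    seen: dict[str, set[str]] = {}
    for source, (csv_text, column) in exports.items():
        for row in csv.DictReader(io.StringIO(csv_text)):
            domain = referring_domain(row[column])
            if domain:
                seen.setdefault(domain, set()).add(source)
    return seen
```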

Toxic link red flags are consistent across remediation playbooks: very low domain rating, no topical relevance to the penalized site, patterns consistent with private blog networks, exact-match anchor text used at scale, and sudden acquisition spikes that do not correspond to any legitimate outreach. Any link that checks multiple boxes goes into the removal queue.
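
The “multiple boxes” rule is easy to make explicit. The sketch assumes you have already gathered each domain’s signals from your link tool and from manual review. The `LinkSignals` fields are hypothetical, as are both numeric thresholds: domain rating under 10, and exact-match anchors on half or more of a domain’s links. Treat them as placeholders to tune, not published cutoffs.

```python
from dataclasses import dataclass


@dataclass
class LinkSignals:
    domain: str
    domain_rating: float
    topically_relevant: bool
    pbn_pattern: bool
    exact_match_anchor_share: float  # share of this domain's links using exact-match anchors
    acquired_in_spike: bool


def red_flags(s: LinkSignals, min_dr: float = 10, anchor_share: float = 0.5) -> list[str]:
    """List which red-flag boxes a referring domain checks."""
    flags = []
    if s.domain_rating < min_dr:
        flags.append("low_dr")
    if not s.topically_relevant:
        flags.append("off_topic")
    if s.pbn_pattern:
        flags.append("pbn")
    if s.exact_match_anchor_share >= anchor_share:
        flags.append("exact_anchor")
    if s.acquired_in_spike:
        flags.append("spike")
    return flags


def removal_queue(signals: list[LinkSignals], min_flags: int = 2) -> list[str]:
    """Domains that check at least min_flags boxes go into the removal queue."""
    return [s.domain for s in signals if len(red_flags(s)) >= min_flags]
```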

Outreach comes first. Use Hunter.io or BuzzStream to find webmaster contact information at scale and send removal requests. Document every attempt—the date, the domain, the email address used, and the response or lack thereof. This paper trail is evidence you will include in the reconsideration packet.
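
A structured log makes the paper trail easy to attach later. The sketch assumes one record per attempt. The hypothetical `OutreachAttempt` fields mirror the four items listed above, and the response labels are illustrative. Domains where no attempt ended in removal become the disavow candidates.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date


@dataclass
class OutreachAttempt:
    attempt_date: date
    domain: str
    contact_email: str
    response: str  # illustrative labels: "removed", "refused", "no reply"


def to_csv(attempts: list[OutreachAttempt]) -> str:
    """Render the outreach log as CSV for the reconsideration packet."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(OutreachAttempt)],
                            lineterminator="\n")
    writer.writeheader()
    for attempt in attempts:
        row = asdict(attempt)
        row["attempt_date"] = attempt.attempt_date.isoformat()
        writer.writerow(row)
    return buf.getvalue()


def unresolved_domains(attempts: list[OutreachAttempt]) -> list[str]:
    """Domains with no attempt marked 'removed'; these are disavow candidates."""
    removed = {a.domain for a in attempts if a.response == "removed"}
    return sorted({a.domain for a in attempts} - removed)
```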

When outreach fails, compile the remaining domains into a disavow file and submit it through Google’s Disavow Tool. Use domain-level disavow format for efficiency. The tool is a last resort, not a first move—overusing it on legitimate links can do more harm than the toxic ones you were trying to neutralize. Budget three to five focused days for a medium-sized site’s link audit; sites with high link volumes or a high percentage of unresponsive webmasters will run longer.
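
Google’s disavow file is a plain text file with one entry per line:

- `domain:example.com` disavows a whole domain.
- A full URL disavows a single page.
- Lines starting with `#` are treated as comments.

Below is a minimal generator for the domain-level format. It assumes the input is the list of domains that outreach failed to clean up.

```python
def build_disavow(domains: list[str], note: str = "") -> str:
    """Render a domain-level disavow file, deduplicated and sorted."""
    lines = [f"# {note}"] if note else []
    cleaned = {d.strip().lower() for d in domains if d.strip()}
    lines += [f"domain:{d}" for d in sorted(cleaned)]
    return "\n".join(lines) + "\n"
```

Generating the file from the same list that fed the outreach log keeps the disavow file and the evidence trail in agreement.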

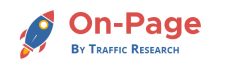

Timeline derived from the article’s ‘Phase the project accordingly’ section and corroborating recovery guides.

What Most People Get Wrong: Noindexing Spam Pages Does Not Remove Them From Google’s Quality Calculus

The misconception that derails more recoveries than any other is the belief that noindexing a bad page removes it from Google’s domain-level quality evaluation. You cannot count on that. Google may still count noindexed spam content as a site-level quality signal. The content exists on the server, and Googlebot can still reach it. The noindex directive tells Google not to show the page in results; it does not tell Google to ignore the page’s existence when evaluating the overall trustworthiness of the domain.

Hiding 200 thin pages behind noindex tags while leaving them live is the SEO equivalent of covering a mold problem with drywall before a home inspection. The inspector will find it. The pages must be substantially rewritten or removed. There is no shortcut here, and the reconsideration request you eventually submit will need to demonstrate that you understood this.
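
A quick way to catch the drywall-over-mold pattern is to compare your triage list against a fresh crawl. The sketch lists flagged URLs that still return 200 and carry a noindex directive. The column names (`Address`, `Status Code`, `Indexability Status`) are assumptions modeled on a Screaming Frog export, so adjust them to your crawler.

```python
import csv
import io


def noindexed_but_live(crawl_csv: str, flagged: set[str]) -> list[str]:
    """Flagged URLs that are still served (200) and merely hidden with noindex."""
    leftovers = []
    for row in csv.DictReader(io.StringIO(crawl_csv)):
        live = row["Status Code"] == "200"
        noindexed = "noindex" in row["Indexability Status"].lower()
        if row["Address"] in flagged and live and noindexed:
            leftovers.append(row["Address"])
    return leftovers
```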

The second most common mistake is submitting the reconsideration request before the work is genuinely done. Submitting early starts a review clock, burns reviewer attention, and often results in a rejection that sets the timeline back further. Do not submit until every flagged page has been actioned, every outreach attempt is logged, and every technical issue surfaced during the audit is resolved.
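
The three conditions above can be checked mechanically before anyone opens the reconsideration form. The sketch takes five hypothetical inputs:

- the flagged URL list from the triage
- a URL-to-action mapping
- the domains with logged outreach
- the removal queue
- any still-open technical issues

```python
def readiness_problems(flagged_urls: list[str], actions: dict[str, str],
                       logged_domains: list[str], removal_queue: list[str],
                       open_tech_issues: list[str]) -> list[str]:
    """Return blocking problems; an empty list means ready to submit."""
    problems = []
    unactioned = sorted(set(flagged_urls) - set(actions))
    if unactioned:
        problems.append(f"flagged URLs with no recorded action: {unactioned}")
    unlogged = sorted(set(removal_queue) - set(logged_domains))
    if unlogged:
        problems.append(f"queued domains with no logged outreach: {unlogged}")
    if open_tech_issues:
        problems.append(f"unresolved technical issues: {sorted(open_tech_issues)}")
    return problems
```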

What to Do Next: Submit, Monitor, and Set Honest Expectations

When the work is complete, the reconsideration packet needs to answer three questions clearly: what the violation was, why it happened, and exactly what was done to fix it. Include a spreadsheet of every URL that was deleted, merged, or substantially rewritten with the action taken and the date. Attach before-and-after screenshots of overhauled pages. Include the outreach log and the disavow file. Include a crawl report showing the current state of the site’s indexable URL inventory. The narrative should be direct: explain what practices led to the penalty, and explain the controls now in place to prevent recurrence.
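
The spreadsheet of actioned URLs can be generated straight from the triage records. The sketch assumes each record is a (URL, action, ISO date, note) tuple, with actions labeled “rewritten”, “merged”, or “deleted”. The one-line summary is a starting point for the narrative, not a replacement for it.

```python
import csv
import io
from collections import Counter


def action_log_csv(rows: list[tuple[str, str, str, str]]) -> str:
    """CSV of every actioned URL, ordered by date then URL."""
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(["url", "action", "date", "note"])
    for row in sorted(rows, key=lambda r: (r[2], r[0])):
        writer.writerow(row)
    return buf.getvalue()


def action_summary(rows: list[tuple[str, str, str, str]]) -> str:
    """One-line count of actions for the reconsideration narrative."""
    counts = Counter(action for _, action, _, _ in rows)
    return ", ".join(f"{counts[a]} {a}" for a in ("rewritten", "merged", "deleted"))
```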

Typical processing time for manual-action reconsideration runs roughly 10 to 30 days, though some cases extend to six weeks and require follow-up rounds. After a successful lift, rankings do not snap back immediately—Google still needs to recrawl and re-evaluate the cleaned-up pages. Dubai SEO Services’ spam update recovery analysis recommends watching for gradual improvements in crawl frequency and index coverage as early signals that the penalty lift is taking effect, even before traffic numbers move.

For post-submission monitoring, check weekly for the first four to eight weeks: GSC indexing trends, crawl activity, key ranking positions, and organic traffic in GA4. The honest client-expectation language is that meaningful traffic movement after a properly executed recovery often takes three to six months from the start of remediation. Phase the project accordingly:

- Days 1–2: Penalty identification and diagnosis—GSC confirmation, algorithm correlation, technical baseline

- Days 3–7: Backlink audit intensive

- Days 8–14: Content audit and triage flagging

- Weeks 3–6: Content remediation—rewrites, merges, deletions, redirect implementation

- Weeks 5–7: E-E-A-T rebuilding (author bios, citations, editorial process documentation)

- Weeks 7–8: Reconsideration packet assembly and submission

- Weeks 9–16: Monitor, respond to any follow-up review requests, track recovery signals
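
For the weekly post-submission checks, a simple week-over-week comparison is usually enough to spot the early signals in crawl activity and index coverage. The sketch assumes you record a few metrics per week, by hand or from exports, and the metric names are illustrative. For average ranking position, a negative change is an improvement.

```python
def weekly_changes(weeks: list[tuple[str, dict[str, float]]]) -> list[tuple[str, dict[str, float]]]:
    """Percentage change per metric versus the previous week, rounded to 0.1."""
    report = []
    for (_, prev), (label, cur) in zip(weeks, weeks[1:]):
        changes = {}
        for metric, value in cur.items():
            before = prev.get(metric)
            if before:  # skip metrics with a missing or zero baseline
                changes[metric] = round((value - before) / before * 100, 1)
        report.append((label, changes))
    return report
```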

This is unglamorous work. It is spreadsheet work, outreach work, rewrite work, and waiting work. The sites that recover are the ones where someone did all of it, documented all of it, and resisted the temptation to cut corners on the parts that are invisible to the client but visible to the reviewer. The building inspector does not care how the facade looks. They want to see the structure is sound.