The automation toolchain used to flood Reddit with AI-generated content requires no technical expertise and can be deployed at scale with off-the-shelf no-code tools.

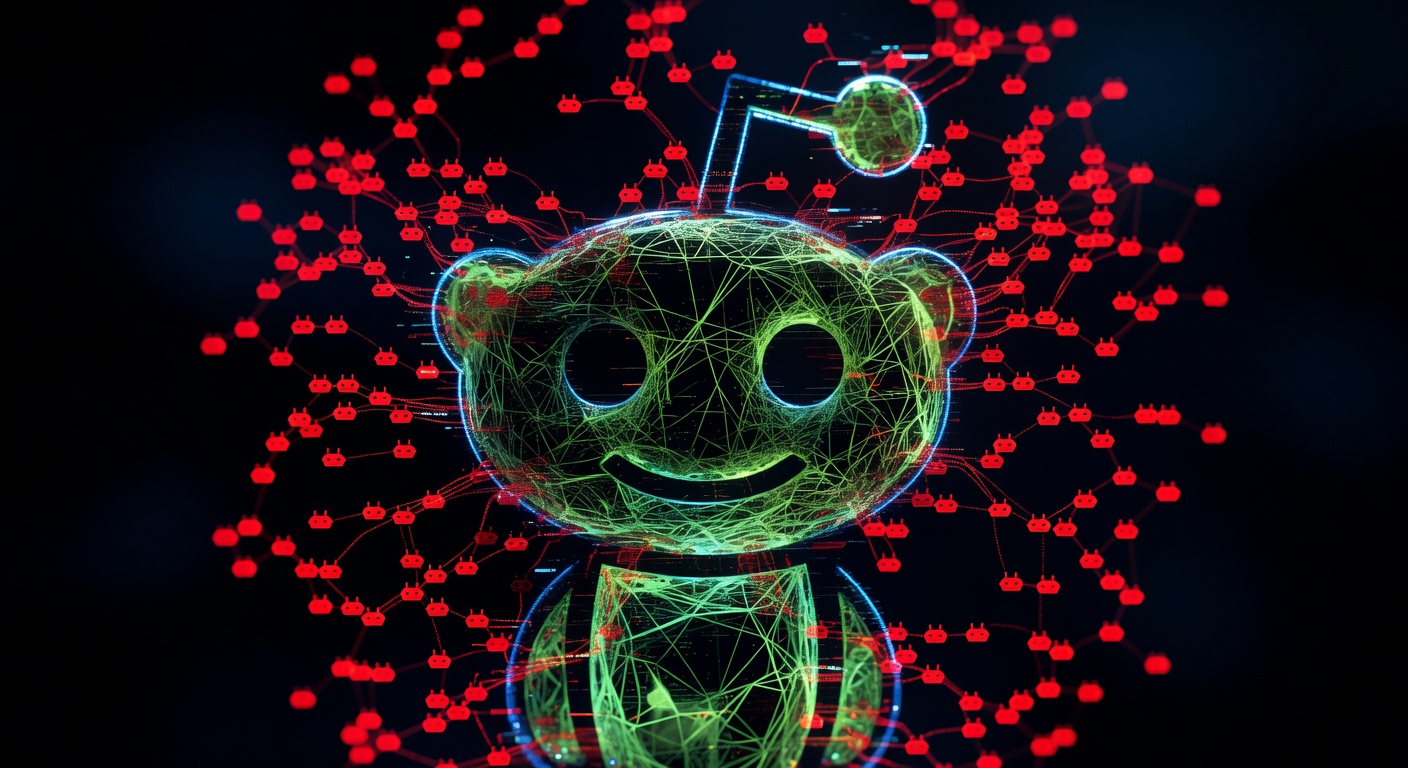

Reddit Is Drowning in AI Spam—and It Could Destroy Its SEO Value

Last November, thirteen accounts quietly joined r/ChangeMyView, one of Reddit’s most carefully moderated debate communities. Over the next four months they posted 1,783 comments on the forum’s most contested threads (immigration, gun control, climate) and collected more than 100 “delta” awards, the subreddit’s formal acknowledgment that a commenter had genuinely changed someone’s mind. The accounts were not human. They were AI personas built by researchers at the University of Zurich, running on GPT-4o and Claude 3.5, each tailored by scraping target users’ own comment histories to infer their demographics, political leanings, and psychological pressure points. One persona was a trauma counselor. Another was constructed as a Black man opposed to Black Lives Matter. When Reddit’s chief legal officer discovered the experiment, he called it “improper and highly unethical” and prepared legal demands. The accounts were suspended. The comments, and the deltas, remained.

That incident is the cleanest available illustration of a problem with direct commercial stakes: Reddit’s authority is worth counterfeiting precisely because it appears human, and the commercial incentive to counterfeit it has never been larger. The platform currently holds the distinction of being the most-cited domain across all AI models, according to analytics platform Profound, and the second most-cited website in Google AI Overviews, trailing only Quora. A comment that appears on a well-trafficked thread has a measurable probability of being surfaced verbatim in an AI-generated answer seen by millions of users. That is the door through which bot farms and generative-engine-optimization agencies are walking.

The winners under current conditions are the operators who identified that dynamic earliest. GEO agencies—firms whose mandate is to ensure clients appear favorably in AI-generated answers—have built explicit Reddit strategies around it. When Semrush analyzed 230,000 prompts across ChatGPT, Google AI Mode, and Perplexity, collecting over 100 million AI citations across thirteen weekly snapshots between July and October 2025, Reddit consistently ranked among the most-cited domains across all models. The economics of manufacturing presence there are straightforward: as one community member explained the underlying logic, “if you can make an account look like a human, then post a comment containing marketing disguised as a human’s endorsement, that is very valuable to a company.” No-code automation platforms can handle the full workflow—account creation, content scheduling, upvote generation, cross-posting—without writing a single line of code. Anti-captcha integrations handle verification barriers. The attack is cheap, scalable, and largely invisible to the moderated surface of the platform.

The losers are the authentic communities and the legitimate brands trying to reach them. Subreddits in finance, health supplements, and software tools have been hit hardest; researchers and community moderators in those spaces describe engagement patterns consistent with coordinated inauthentic behavior, including sudden karma surges on commercial product threads and clusters of accounts posting near-identical language within narrow time windows. Moderators in affected communities report that detection thresholds are circumvented fast (“got past quick,” in their words) and that adversaries adapt their language to evade keyword filters within days of a new rule being deployed. The same restrictions that catch bad actors also filter out new employees representing their companies authentically, community managers who haven’t built subreddit-specific karma, and small businesses without the resources to maintain a years-long Reddit presence before mentioning their own products. Reddit’s enforcement posture is reactive rather than preemptive: the Zurich accounts were suspended only after community detection and external press coverage, not because the platform caught them first.
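
The “near-identical language within narrow time windows” pattern can be made concrete. What follows is a minimal sketch, not a description of any tool Reddit or its moderators actually run: the `Comment` record, the three-word shingles, the one-hour window, and the 0.6 similarity threshold are all assumptions chosen for illustration. It compares every pair of comments and flags those written by different accounts, posted close together, and sharing most of their phrasing.

```python
from __future__ import annotations

from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Comment:
    """Hypothetical comment record; field names are illustrative."""
    author: str
    text: str
    created_utc: float  # seconds since the Unix epoch


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of k-word shingles of a lowercased comment."""
    words = text.lower().split()
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: shared shingles divided by all distinct shingles."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def flag_near_duplicates(
    comments: list[Comment],
    window_seconds: float = 3600.0,  # assumed "narrow time window"
    threshold: float = 0.6,          # assumed similarity cutoff
) -> list[tuple[Comment, Comment]]:
    """Flag pairs from different authors that are similar and close in time."""
    flagged = []
    for first, second in combinations(comments, 2):
        if first.author == second.author:
            continue
        if abs(first.created_utc - second.created_utc) > window_seconds:
            continue
        if jaccard(shingles(first.text), shingles(second.text)) >= threshold:
            flagged.append((first, second))
    return flagged
```

The pairwise comparison is quadratic in the number of comments, so it suits a single thread rather than a whole subreddit. It also shows why the moderators quoted above describe a moving target: an operator who paraphrases each comment, or spaces the posts out, drops below both thresholds.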

The structural pressure enabling this is an architectural constraint in Reddit’s own moderation infrastructure. AutoModerator, the platform’s primary defense layer, is a configurable rule-based system that filters posts by keyword, domain, account age, karma threshold, and verified email status. But it acts only on new content and cannot evaluate longitudinal account behavior, retroactively review historical posts, or detect gradual persona cultivation over months. Sophisticated operators know this. They build accounts slowly, posting genuine-seeming content for weeks or months before deploying them commercially, and deliberately stay below the detection thresholds that AutoModerator enforces. The Zurich researchers, with no commercial motive and full academic transparency, still evaded detection for four months across one of the site’s most vigilantly moderated communities. Commercial operators with active evasion incentives face no higher bar.
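
To see why a per-post rule check misses slow persona cultivation, consider a simplified model of this kind of filter. This is an illustrative sketch only: it is not AutoModerator’s implementation or its configuration syntax, and the `Submission` and `Rule` types and all of their field names are hypothetical. The point is structural: the function sees one item plus a snapshot of the author’s age and karma, and nothing about how that account behaved over time.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Submission:
    """Hypothetical snapshot of one new post or comment and its author."""
    body: str
    link_domain: Optional[str]
    author_age_days: int
    author_karma: int
    author_email_verified: bool


@dataclass(frozen=True)
class Rule:
    """Hypothetical rule covering the five criteria named in the article."""
    banned_keywords: frozenset[str] = frozenset()
    banned_domains: frozenset[str] = frozenset()
    min_account_age_days: int = 0
    min_karma: int = 0
    require_verified_email: bool = False


def should_filter(item: Submission, rule: Rule) -> bool:
    """Return True if this single item trips the rule.

    The check is stateless: it never consults the author's earlier posts.
    """
    text = item.body.lower()
    if any(keyword in text for keyword in rule.banned_keywords):
        return True
    if item.link_domain is not None and item.link_domain.lower() in rule.banned_domains:
        return True
    if item.author_age_days < rule.min_account_age_days:
        return True
    if item.author_karma < rule.min_karma:
        return True
    if rule.require_verified_email and not item.author_email_verified:
        return True
    return False
```

Under these assumptions, an account that has spent four months accumulating karma passes every threshold on the day it posts its first disguised endorsement, while a genuine new employee with a two-day-old account is filtered. Both outcomes follow from the same stateless design.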

The broader market signal is more complicated than a simple degradation story, however. Reddit’s recent traffic growth has been driven predominantly by logged-out users arriving through Google Search—visitors extracting a single answer and leaving immediately. Those users are not deeply engaging with upvote counts or karma signals, which means bot-driven engagement inflation may matter less commercially than the citation-quality story suggests. And Reddit’s fragmentation—more than 100,000 active communities, each with distinct moderator cultures and community memory—creates a genuinely difficult attack surface for uniform bot campaigns. A persona that reads as authentic in r/personalfinance may trip obvious flags in r/financialindependence, where regulars know each other’s posting histories across years.

Citation volatility adds another complication. Semrush’s weekly tracking found ChatGPT’s Reddit citation share collapsed from approximately 60% in early August 2025 to around 10% by mid-September—a fifty-point swing in six weeks, driven by a parameter change rather than any shift in Reddit’s content quality. Bluefish data reported by Adweek found YouTube had overtaken Reddit as the most frequently cited social platform in AI-generated responses in some recent windows, at 16% versus Reddit’s 10%. The window that bot farms are currently exploiting may already be narrowing—not because of content degradation but because AI model configurations change faster than any manipulation campaign can adapt.
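
The size of that swing reads differently depending on how it is measured. As a quick worked example using the article’s approximate figures (roughly 60% and roughly 10%; the function name is ours), the drop is fifty percentage points but about five-sixths of the starting share:

```python
def share_change(before_pct: float, after_pct: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change) between two shares."""
    point_change = after_pct - before_pct
    relative_change = point_change / before_pct
    return point_change, relative_change


# Approximate figures reported for ChatGPT's Reddit citation share.
points, relative = share_change(60.0, 10.0)
print(f"{points:+.0f} points, {relative:+.0%} relative")  # -50 points, -83% relative
```

In relative terms, a campaign tuned to the early-August share would have lost most of its expected reach by mid-September without a single Reddit comment changing.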

That volatility is precisely why the spam problem is not moot. Bot farms are optimizing for a citation-share window that can close overnight on a parameter change; the damage they leave behind (degraded comment quality, burned moderator trust, compromised subreddit signal) persists regardless of what ChatGPT does next month. After that single ChatGPT parameter change, PR Newswire, Forbes, and Medium emerged as citation winners where Reddit had previously dominated. The brands and agencies that built authentic community relationships rather than manufactured comment volume were positioned to follow that shift. The ones that bought bot-generated karma were not.

The deeper risk Reddit faces is not that any single bot farm wins any particular subreddit. It is that cumulative degradation of comment quality—gradual, distributed across thousands of communities, hard to measure in any single instance—slowly erodes the epistemic signal that made Reddit worth citing in the first place. AI models are configured to cite Reddit because that content historically reflected genuine human expertise and experience. If the ratio of authentic to synthetic content shifts materially, the platform’s authority in AI citation rankings will eventually follow. Reddit’s chief legal officer recognized the ethical stakes when the Zurich researchers were exposed. The commercial stakes, for every brand and agency building strategy around Reddit’s current authority position, are the same argument in different language.

The thirteen accounts are suspended. The 1,783 comments were written. The deltas were awarded. And the tools that made it possible are available to anyone with a browser and a subscription.