Publishers are confronting a structural decline in search referral traffic as Google’s AI Overviews answer queries before users reach any external site.

The Zero-Click Apocalypse: How AI Search Is Killing Publisher Traffic

When more than 70 news-publisher leaders gathered at the Press Gazette US conference earlier this year, the session that drew the most urgent conversation was built around a single question. It was not whether Google would eventually stop sending traffic entirely, but whether anyone had a plan for the day it did. That shift, from defense to contingency, came with hard data behind it. In March 2025, Pew Research Center tracked 68,879 real searches conducted by 900 U.S. adults, matching browsing logs against scraped search pages to identify when Google’s AI Overviews appeared and what users did next. The finding left little room for interpretation.

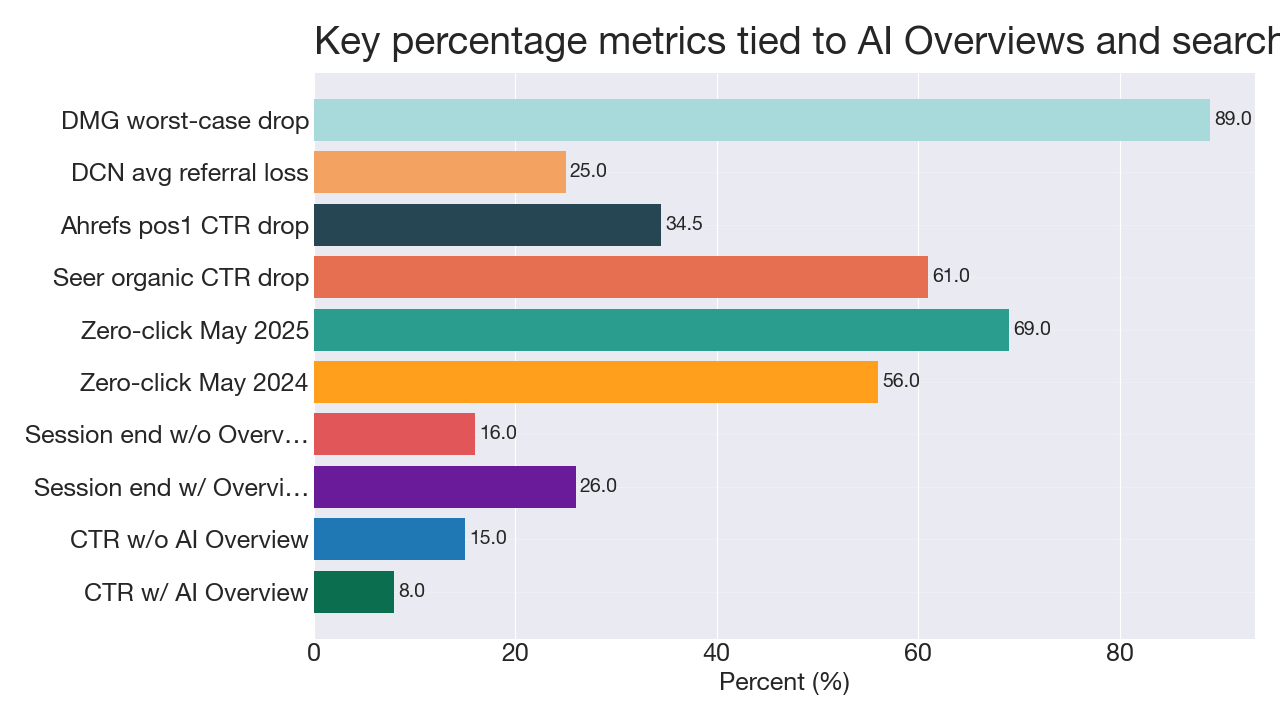

Users clicked a traditional search result in just 8% of visits when an AI Overview was present. Without one, they clicked in 15% of visits, nearly twice as often. Pages with AI summaries also ended browsing sessions at a measurably higher rate: 26% of those visits ended without any further navigation, compared with 16% for pages without a summary. Pew’s baseline was stark in itself: roughly two-thirds of all Google searches already end without a click to any external site. AI Overviews are compounding that tendency, not originating it.
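The Pew percentages are easier to compare as relative changes. The short Python sketch below does that arithmetic using only the four figures quoted above; the function and variable names are illustrative and not drawn from Pew's report.

```python
def relative_change(before: float, after: float) -> float:
    """Return the change from `before` to `after` as a fraction of `before`."""
    return (after - before) / before


# Figures quoted in the text (Pew Research Center, March 2025 data).
click_rate_without_overview = 0.15
click_rate_with_overview = 0.08
session_end_rate_without_summary = 0.16
session_end_rate_with_summary = 0.26

click_change = relative_change(click_rate_without_overview, click_rate_with_overview)
session_end_change = relative_change(
    session_end_rate_without_summary, session_end_rate_with_summary
)

print(f"Relative change in click rate: {click_change:.1%}")
print(f"Relative change in session-end rate: {session_end_change:.1%}")
```

On these inputs, the click rate is a little under half as high when an Overview appears, and the share of visits that end the session is more than half again as large.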

Compiled from Pew Research Center; Similarweb; Seer Interactive; Ahrefs; Digital Content Next; DMG Media.

Those percentages matter because of how heavily publishers depend on search. Search, including Google Discover and traditional organic results, accounts for between 20% and 40% of referral traffic to most major publishers, making it their single largest external traffic source. Google’s AI Overviews now appear on roughly 16–21% of all queries, according to tracking data from Semrush and Ahrefs, but that figure spikes to 40–90% in health and science, which are among the verticals where publishers have the deepest content libraries. The feature now reaches 1.5 billion users monthly across more than 200 countries. Similarweb data shows zero-click searches rose from 56% to 69% between May 2024 and May 2025, a timeline that maps directly onto the Overviews rollout.

The presentation format explains much of the mechanism. Advanced Web Ranking found that AI Overviews, when expanded, average roughly 169 words and approximately seven links, and push the first organic search result more than 1,600 pixels down the page, well below the fold on most screens. Ahrefs estimated a 34.5% drop in position-one click-through rates for informational queries that trigger Overviews. Seer Interactive measured a 61% fall in organic CTR across more than 3,100 informational queries with 25 million organic impressions. Digital Content Next’s analysis put average publisher referral traffic losses at up to 25%.

DMG Media supplied the most extreme specific data point in regulatory submissions: click-through declines as steep as 89% on individual queries where an Overview appeared directly above a visible link to their content. That number does not describe the average publisher experience, but it illustrates precisely what industry-wide averages can obscure — the aggregate masks brutal local effects for individual titles on individual queries.
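A back-of-envelope calculation shows how an industry-wide average can hide a much larger hit in one vertical. The Python sketch below multiplies three figures cited in this article: the share of traffic that comes from search, the share of queries showing an Overview, and the measured CTR decline on affected queries. The multiplicative model is an assumption made for illustration, not a method used by any of the cited studies. It treats the three figures as independent, applies the search share to total traffic, and applies CTR declines measured on informational queries to every affected query.

```python
def estimated_traffic_loss(
    search_share: float, overview_prevalence: float, ctr_drop: float
) -> float:
    """Rough share of total traffic lost, under the simplifying assumption
    that the three factors are independent and simply multiply."""
    return search_share * overview_prevalence * ctr_drop


# Low end of each range quoted in the article.
low_case = estimated_traffic_loss(
    search_share=0.20, overview_prevalence=0.16, ctr_drop=0.345
)

# High end of each range, at general-query Overview prevalence.
high_case_general = estimated_traffic_loss(
    search_share=0.40, overview_prevalence=0.21, ctr_drop=0.61
)

# Same high-end publisher, but in a vertical with 90% Overview prevalence.
high_case_health = estimated_traffic_loss(
    search_share=0.40, overview_prevalence=0.90, ctr_drop=0.61
)

for label, value in [
    ("Low case", low_case),
    ("High case, general queries", high_case_general),
    ("High case, health/science prevalence", high_case_health),
]:
    print(f"{label}: {value:.1%} of total traffic")
```

The absolute outputs should not be read as forecasts. The point is the spread: changing only the prevalence input, from the general-query figure to the health-and-science figure, multiplies the estimated loss several times over.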

Those local effects run unevenly in both directions. Tracking across publishers showed ProPublica gaining 51.4% in global traffic while Rolling Stone fell 11.8% and Vanity Fair dropped 19.7%. Seer Interactive’s data identified one partial protective mechanism: pages explicitly cited inside an Overview experienced roughly 35% higher organic CTR than non-cited competitors. Being named as a source in the summary can partially offset the referral losses that same summary causes for everyone else — though the net gain depends entirely on how often a given title earns that citation.

Google has contested the industry interpretation of these findings. The company disputed Pew’s methodology, specifically objecting to the use of scraped search pages rather than Google’s own impression data, and told reporters it has not observed significant drops in aggregate web traffic from its platform. That response points to a measurement gap that is itself a story within the story. Search Console does not currently expose a clean signal for Overview-driven impressions and clicks, which means publishers cannot reliably separate Overview-driven traffic from standard organic traffic in their own analytics. Without that separation, the platform controls the only usable data, and publishers cannot negotiate over something they cannot measure.

That absence of transparency has driven industry-wide defensive action. BuzzStream analysis of nearly 100 top UK and US news sites found that 79% are already blocking at least one AI training crawler, and 71% are blocking retrieval-style bots used for live search grounding. Harry Clarkson-Bennett, SEO director at The Telegraph, stated the logic plainly: “LLMs are not designed to send referral traffic and publishers still need traffic to survive. So most of us block AI bots because these companies are not willing to pay for the content their model has been trained on.” Conference participants added a commercial rationale: allowing free access now structurally undermines future licensing negotiations. If AI companies already hold your archive, they have no financial reason to pay for it later.
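In practice, this kind of blocking is usually declared in a site's robots.txt file. The Python sketch below uses the standard library's `urllib.robotparser` to check which crawlers a given set of rules would turn away. The rules and the `example.com` URL are hypothetical and not taken from any publisher named here. `GPTBot` and `CCBot` are used only as examples of crawler tokens; the source does not say which bots individual sites block. Note also that robots.txt is a request that well-behaved crawlers honor, not an enforcement mechanism.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block two example AI crawlers, allow everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: *
Allow: /
"""


def blocked_agents(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Map each user agent to True if the rules disallow it from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: not parser.can_fetch(agent, url) for agent in agents}


result = blocked_agents(
    ROBOTS_TXT,
    agents=["GPTBot", "CCBot", "Googlebot"],
    url="https://example.com/news/story",  # placeholder URL
)
print(result)
```

A check like this can be run against a live file by replacing the inline string with the fetched contents of a site's `/robots.txt`.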

The practical question the conference kept returning to was which revenue diversification moves are generating enough money to replace what search is taking away — and at what scale. The honest answer from most participants was that replacement is partial and uneven. Events and newsletter sponsorships are the two categories showing the most concrete results; both are direct-relationship models that bypass platform intermediaries entirely and monetize audience trust rather than page views. Subscription revenue, particularly for titles with strong brand identity and subject-matter authority, has shown resilience that advertising-led models have not. The challenge is that these streams require audience density and brand trust that took years to build, and they scale more slowly than the traffic losses are arriving.

Across 68,879 actual user searches, Pew’s behavioral signal is not ambiguous. When a machine answers the question at the top of the page, most users do not scroll down to read who reported the story, verified the claim, or broke the news. They get their answer and leave. For publishers in 2026, the open question is whether the direct relationships being built with subscribers, event attendees, newsletter readers, and licensing partners can be assembled fast enough to meet that reality before the referral traffic that still remains finishes its descent. As Jasper Jackson, editor of Press Gazette, wrote after the conference: the news industry should never again allow itself to become dependent on a single third-party tech platform. The lesson was available from social media. It wasn’t learned then. Whether it is learned now, before the next distribution layer automates away the click entirely, remains to be seen.